The Hadoop tool (Hadoop 2.7.2 or later) lets you run computing tasks with Tencent Cloud COS as the underlying file storage system. A Hadoop cluster can be launched in three modes: stand-alone, pseudo-distributed, and fully-distributed. This document uses Hadoop 2.7.4 as an example to describe how to build a fully-distributed Hadoop environment and how to run a simple test with wordcount.

Preparation

1. Prepare several servers.

2. Install and configure the system: CentOS-7-x86_64-DVD-1611.iso.

3. Install the Java environment. For more information, see Installing and Configuring Java.

4. Install the available Hadoop package: Apache Hadoop Releases Download.

Network Configuration

Use ifconfig -a to check the IP address of each server, then use the ping command to check that the servers can reach each other, and record the IP address of each server.

Configuring CentOS

Configure hosts

vi /etc/hosts

Edit the content:

202.xxx.xxx.xxx master
202.xxx.xxx.xxx slave1
202.xxx.xxx.xxx slave2
202.xxx.xxx.xxx slave3

Replace the IP addresses with the real ones.
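Once the entries are in place, a short loop can confirm that each host name resolves and answers a ping. The following is a minimal sketch, assuming the four host names used in this document; the check_hosts function and the PING_CMD variable are hypothetical names introduced for illustration, and the -W timeout option assumes the Linux ping shipped with CentOS.

```shell
#!/bin/sh
# Hypothetical helper: probe each host once and report the result.
# PING_CMD is an assumption that lets you swap in another probe command.
PING_CMD="${PING_CMD:-ping -c 1 -W 2}"

check_hosts() {
    failed=0
    for host in "$@"; do
        # $PING_CMD is intentionally unquoted so its options split into words.
        if $PING_CMD "$host" >/dev/null 2>&1; then
            echo "$host reachable"
        else
            echo "$host UNREACHABLE"
            failed=1
        fi
    done
    # Non-zero exit status if any host did not answer.
    return "$failed"
}

# Usage (host names taken from /etc/hosts):
#   check_hosts master slave1 slave2 slave3
```

Running the check on every server, not only on master, catches a hosts file that was edited on one machine but not the others.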

Turn off firewall

systemctl status firewalld.service    # Check the firewall status
systemctl stop firewalld.service      # Turn off the firewall
systemctl disable firewalld.service   # Prevent the firewall from starting on boot

Time synchronization

yum install -y ntp           # Install the ntp service
ntpdate cn.pool.ntp.org      # Sync network time

Install and configure JDK

Upload the JDK installer package (such as jdk-8u144-linux-x64.tar.gz) to the root directory.

mkdir /usr/java
tar -zxvf jdk-8u144-linux-x64.tar.gz -C /usr/java/
rm -rf jdk-8u144-linux-x64.tar.gz

Copy JDKs among hosts

scp -r /usr/java slave1:/usr
scp -r /usr/java slave2:/usr
scp -r /usr/java slave3:/usr
.......

Configure environment variables for JDK of each host

vi /etc/profile

Edit the content:

export JAVA_HOME=/usr/java/jdk1.8.0_144
export PATH=$JAVA_HOME/bin:$PATH
export CLASSPATH=.:$JAVA_HOME/lib/dt.jar:$JAVA_HOME/lib/tools.jar

Apply the configuration and check the Java version:

source /etc/profile   # Make the configuration file take effect
java -version         # View the Java version

Configuring Keyless Access via SSH

Check the SSH service status on each host:

systemctl status sshd.service                  # Check the SSH service status.
yum install openssh-server openssh-clients     # Install the SSH service. Skip this step if it is already installed.
systemctl start sshd.service                   # Start the SSH service. Skip this step if it is already running.

Generate a key on each host:

ssh-keygen -t rsa   # Generate a key pair

On slave1:

cp ~/.ssh/id_rsa.pub ~/.ssh/slave1.id_rsa.pub
scp ~/.ssh/slave1.id_rsa.pub master:~/.ssh

On slave2:

cp ~/.ssh/id_rsa.pub ~/.ssh/slave2.id_rsa.pub
scp ~/.ssh/slave2.id_rsa.pub master:~/.ssh

And so on...

On master:

cd ~/.ssh
cat id_rsa.pub >> authorized_keys
cat slave1.id_rsa.pub >> authorized_keys
cat slave2.id_rsa.pub >> authorized_keys
scp authorized_keys slave1:~/.ssh
scp authorized_keys slave2:~/.ssh
scp authorized_keys slave3:~/.ssh
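After authorized_keys has been distributed, it may help to confirm that master can log in to each slave without a password before moving on to Hadoop. The following is a minimal sketch; verify_ssh and SSH_CMD are hypothetical names introduced for illustration. It relies on the OpenSSH BatchMode option, which makes ssh fail instead of prompting, so a host whose host key has not yet been accepted may also be reported as failed.

```shell
#!/bin/sh
# Hypothetical helper: confirm that key-based login works for each host.
# BatchMode=yes makes ssh fail instead of prompting for a password.
SSH_CMD="${SSH_CMD:-ssh -o BatchMode=yes -o ConnectTimeout=5}"

verify_ssh() {
    status=0
    for host in "$@"; do
        # Run the no-op command "true" remotely; only the exit status matters.
        if $SSH_CMD "$host" true >/dev/null 2>&1; then
            echo "$host: key login OK"
        else
            echo "$host: key login FAILED"
            status=1
        fi
    done
    return "$status"
}

# Usage, run on master:
#   verify_ssh slave1 slave2 slave3
```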

Installing and Configuring Hadoop

Installing Hadoop

Upload the Hadoop installer package (such as hadoop-2.7.4.tar.gz) to the root directory.

tar -zxvf hadoop-2.7.4.tar.gz -C /usr
rm -rf hadoop-2.7.4.tar.gz
mkdir /usr/hadoop-2.7.4/tmp
mkdir /usr/hadoop-2.7.4/logs
mkdir /usr/hadoop-2.7.4/hdf
mkdir /usr/hadoop-2.7.4/hdf/data
mkdir /usr/hadoop-2.7.4/hdf/name

Go to the hadoop-2.7.4/etc/hadoop directory and proceed to the next step.

Configure Hadoop

1. Add the following to the hadoop-env.sh file.

export JAVA_HOME=/usr/java/jdk1.8.0_144

If the SSH port is not 22 (the default value), modify it in the hadoop-env.sh file:

export HADOOP_SSH_OPTS="-p 1234"

2. Modify yarn-env.sh

export JAVA_HOME=/usr/java/jdk1.8.0_144

3. Modify slaves

Configure the content:

Delete:

localhost

Add:

slave1
slave2
slave3

4. Modify core-site.xml

<configuration>
  <property>
    <name>fs.default.name</name>
    <value>hdfs://master:9000</value>
  </property>
  <property>
    <name>hadoop.tmp.dir</name>
    <value>file:/usr/hadoop-2.7.4/tmp</value>
  </property>
</configuration>
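If the same file has to be produced for several clusters, generating it from a few parameters can reduce hand-editing mistakes. The following is a minimal sketch that prints a core-site.xml equivalent to the one shown here; render_core_site is a hypothetical function name, and the output path in the usage comment assumes the installation directory used in this document.

```shell
#!/bin/sh
# Hypothetical helper: print a core-site.xml for the given NameNode host,
# NameNode port, and Hadoop temporary directory.
render_core_site() {
    namenode_host="$1"
    namenode_port="$2"
    tmp_dir="$3"
    cat <<EOF
<configuration>
  <property>
    <name>fs.default.name</name>
    <value>hdfs://${namenode_host}:${namenode_port}</value>
  </property>
  <property>
    <name>hadoop.tmp.dir</name>
    <value>file:${tmp_dir}</value>
  </property>
</configuration>
EOF
}

# Usage:
#   render_core_site master 9000 /usr/hadoop-2.7.4/tmp \
#       > /usr/hadoop-2.7.4/etc/hadoop/core-site.xml
```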

5. Modify hdfs-site.xml

<configuration>
  <property>
    <name>dfs.datanode.data.dir</name>
    <value>/usr/hadoop-2.7.4/hdf/data</value>
    <final>true</final>
  </property>
  <property>
    <name>dfs.namenode.name.dir</name>
    <value>/usr/hadoop-2.7.4/hdf/name</value>
    <final>true</final>
  </property>
</configuration>

6. Modify mapred-site.xml

<configuration>
  <property>
    <name>mapreduce.framework.name</name>
    <value>yarn</value>
  </property>
  <property>
    <name>mapreduce.jobhistory.address</name>
    <value>master:10020</value>
  </property>
  <property>
    <name>mapreduce.jobhistory.webapp.address</name>
    <value>master:19888</value>
  </property>
</configuration>

7. Modify yarn-site.xml

<configuration>
  <property>
    <name>yarn.nodemanager.aux-services.mapreduce_shuffle.class</name>
    <value>org.apache.hadoop.mapred.ShuffleHandler</value>
  </property>
  <property>
    <name>yarn.nodemanager.aux-services</name>
    <value>mapreduce_shuffle</value>
  </property>
  <property>
    <name>yarn.resourcemanager.address</name>
    <value>master:8032</value>
  </property>
  <property>
    <name>yarn.resourcemanager.scheduler.address</name>
    <value>master:8030</value>
  </property>
  <property>
    <name>yarn.resourcemanager.resource-tracker.address</name>
    <value>master:8031</value>
  </property>
  <property>
    <name>yarn.resourcemanager.admin.address</name>
    <value>master:8033</value>
  </property>
  <property>
    <name>yarn.resourcemanager.webapp.address</name>
    <value>master:8088</value>
  </property>
</configuration>

8. Copy Hadoop among hosts

scp -r /usr/hadoop-2.7.4 slave1:/usr
scp -r /usr/hadoop-2.7.4 slave2:/usr
scp -r /usr/hadoop-2.7.4 slave3:/usr
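With more than a few slaves, the copy can be driven from the slaves file instead of being typed per host. The following is a minimal sketch; distribute and DRY_RUN are hypothetical names introduced for illustration. By default it only prints the scp commands it would run, so the list can be reviewed first.

```shell
#!/bin/sh
# Hypothetical helper: copy a directory to every host listed in a slaves file.
# With DRY_RUN=1 (the default here) the scp commands are only printed.
DRY_RUN="${DRY_RUN:-1}"

distribute() {
    src_dir="$1"
    dest_parent="$2"
    slaves_file="$3"
    # Skip blank lines and comment lines in the slaves file.
    grep -v -e '^[[:space:]]*$' -e '^[[:space:]]*#' "$slaves_file" |
    while read -r host; do
        if [ "$DRY_RUN" = "1" ]; then
            echo "scp -r $src_dir $host:$dest_parent"
        else
            # Redirect stdin so scp does not consume the remaining host list.
            scp -r "$src_dir" "$host:$dest_parent" </dev/null
        fi
    done
}

# Usage (paths from this document):
#   distribute /usr/hadoop-2.7.4 /usr /usr/hadoop-2.7.4/etc/hadoop/slaves
#   DRY_RUN=0; distribute /usr/hadoop-2.7.4 /usr /usr/hadoop-2.7.4/etc/hadoop/slaves
```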

9. Configure environment variables for Hadoop of each host

Open the configuration file:

vi /etc/profile

Edit the content:

export HADOOP_HOME=/usr/hadoop-2.7.4
export PATH=$HADOOP_HOME/bin:$HADOOP_HOME/sbin:$PATH
export HADOOP_LOG_DIR=/usr/hadoop-2.7.4/logs
export YARN_LOG_DIR=$HADOOP_LOG_DIR

Apply the configuration file:

source /etc/profile

Start Hadoop

1. Format namenode

cd /usr/hadoop-2.7.4/sbin
hdfs namenode -format

2. Start

cd /usr/hadoop-2.7.4/sbin
start-all.sh

3. Check processes

Run jps on each host to list its Java processes. If the processes on master include ResourceManager, SecondaryNameNode, and NameNode, Hadoop has started successfully. For example:

2212 ResourceManager
2484 Jps
1917 NameNode
2078 SecondaryNameNode

If the processes on each slave include DataNode and NodeManager, Hadoop has started successfully. For example:

17153 DataNode
17334 Jps
17241 NodeManager

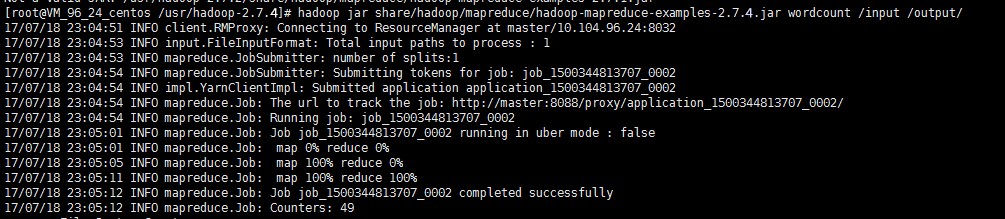

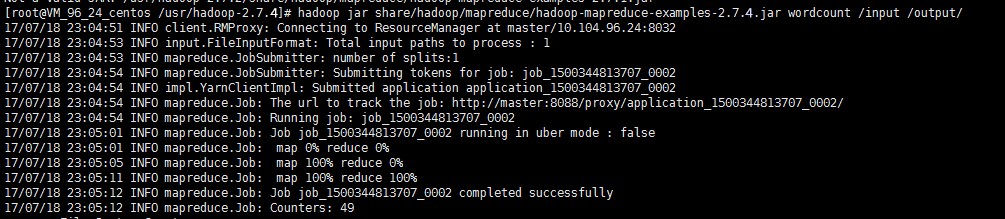
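The process check can also be scripted so that a missing daemon is flagged rather than spotted by eye. The following is a minimal sketch that reads a process list in the "pid name" format shown in the examples; check_daemons is a hypothetical function name introduced for illustration.

```shell
#!/bin/sh
# Hypothetical helper: read a "<pid> <name>" process list on stdin and confirm
# that every daemon named in the arguments appears in it.
check_daemons() {
    jps_output=$(cat)
    missing=0
    for daemon in "$@"; do
        # Compare the whole second field so that "NameNode" does not
        # match "SecondaryNameNode".
        if printf '%s\n' "$jps_output" |
            awk -v d="$daemon" '$2 == d { found = 1 } END { exit !found }'; then
            echo "$daemon running"
        else
            echo "$daemon MISSING"
            missing=1
        fi
    done
    return "$missing"
}

# Usage on master:
#   jps | check_daemons NameNode SecondaryNameNode ResourceManager
# Usage on a slave:
#   jps | check_daemons DataNode NodeManager
```

The function returns a non-zero exit status when any daemon is missing, so it can gate later steps in a setup script.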

Running wordcount

The wordcount example built into Hadoop can be called directly. After Hadoop starts, run the following commands from the Hadoop installation directory (/usr/hadoop-2.7.4) to create an input directory in HDFS, upload a local file (input.txt), and run the job:

hadoop fs -mkdir /input
hadoop fs -put input.txt /input
hadoop jar share/hadoop/mapreduce/hadoop-mapreduce-examples-2.7.4.jar wordcount /input /output/

View output directory

hadoop fs -ls /output

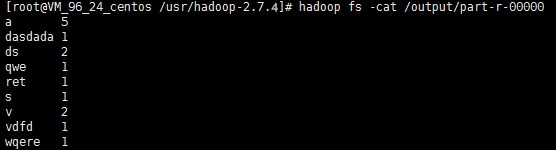

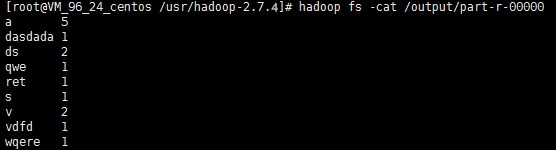

View output result

hadoop fs -cat /output/part-r-00000
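The wordcount result file holds one word and its count per line, separated by a tab, in key order rather than by frequency. To see the most frequent words first, the output can be piped through sort. The following is a minimal sketch; top_words is a hypothetical function name introduced for illustration.

```shell
#!/bin/sh
# Hypothetical helper: read wordcount output ("word<TAB>count" per line) on
# stdin and print the N most frequent words, highest count first.
top_words() {
    limit="${1:-10}"
    tab=$(printf '\t')
    # Sort numerically on the count (field 2, descending), then by word.
    sort -t "$tab" -k2,2nr -k1,1 | head -n "$limit"
}

# Usage:
#   hadoop fs -cat /output/part-r-00000 | top_words 5
```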

Note:

For more information on how to run Hadoop in stand-alone and pseudo-distributed modes, see Get Started with Hadoop on the official website.
